Tagging @grok in an X post plus a few dots and dashes was all that was needed last night for a bad actor to pickpocket a verified crypto wallet without ever touching the private keys.

Agentic token launchpad Bankrbot reported on May 4 that it had sent 3 billion DRB on Base to the recipient 0xe8e47...a686b.

The funds came from a wallet assigned to X’s AI, Grok, and were sent to an unauthorized wallet owned by a bad actor. This Base transaction shows the on-chain transfer path behind the post.

CryptoSlate’s review of X posts around the incident points to a reported command path that began with Morse-code obfuscation. Grok decoded the text into a clear public instruction tagging @bankrbot and asking it to send the tokens, while Bankrbot handled the command as executable.

The exposed layer was the handoff from language to authority. A model that decodes a puzzle, writes a helpful reply, or reformats a user’s text can become part of a payment rail when another agent treats that output as valid.

For crypto investors, this transfer should turn AI-agent risk from an abstract security debate into a wallet-control problem. A public command can become spend authority when one system treats model output as an instruction and another system has permission to move tokens.

Wallet permissions, the parser, the social trigger, and the execution policy each become a layer of the attack surface.

Posts and transaction context reviewed by CryptoSlate put the DRB transfer at roughly $155,000 to $200,000 at the time, with DebtReliefBot price data providing market context for the token.

Reports reviewed by CryptoSlate also say most funds are being returned, and some DRB is reportedly being retained as an informal bug bounty. That outcome reduced the loss, but it also showed how much the recovery depended on post-transaction coordination rather than pre-transaction limits.

Bankr developer 0xDeployer said 80% of the funds had been returned, while the remaining 20% would be discussed with the DRB community. That confirmed the partial recovery while leaving the final treatment of the retained funds unresolved.

0xDeployer also said Bankr automatically provisions an X wallet for every account that interacts with the platform, including Grok. According to the post, that wallet is controlled by whoever controls the X account rather than by Bankr or xAI staff.

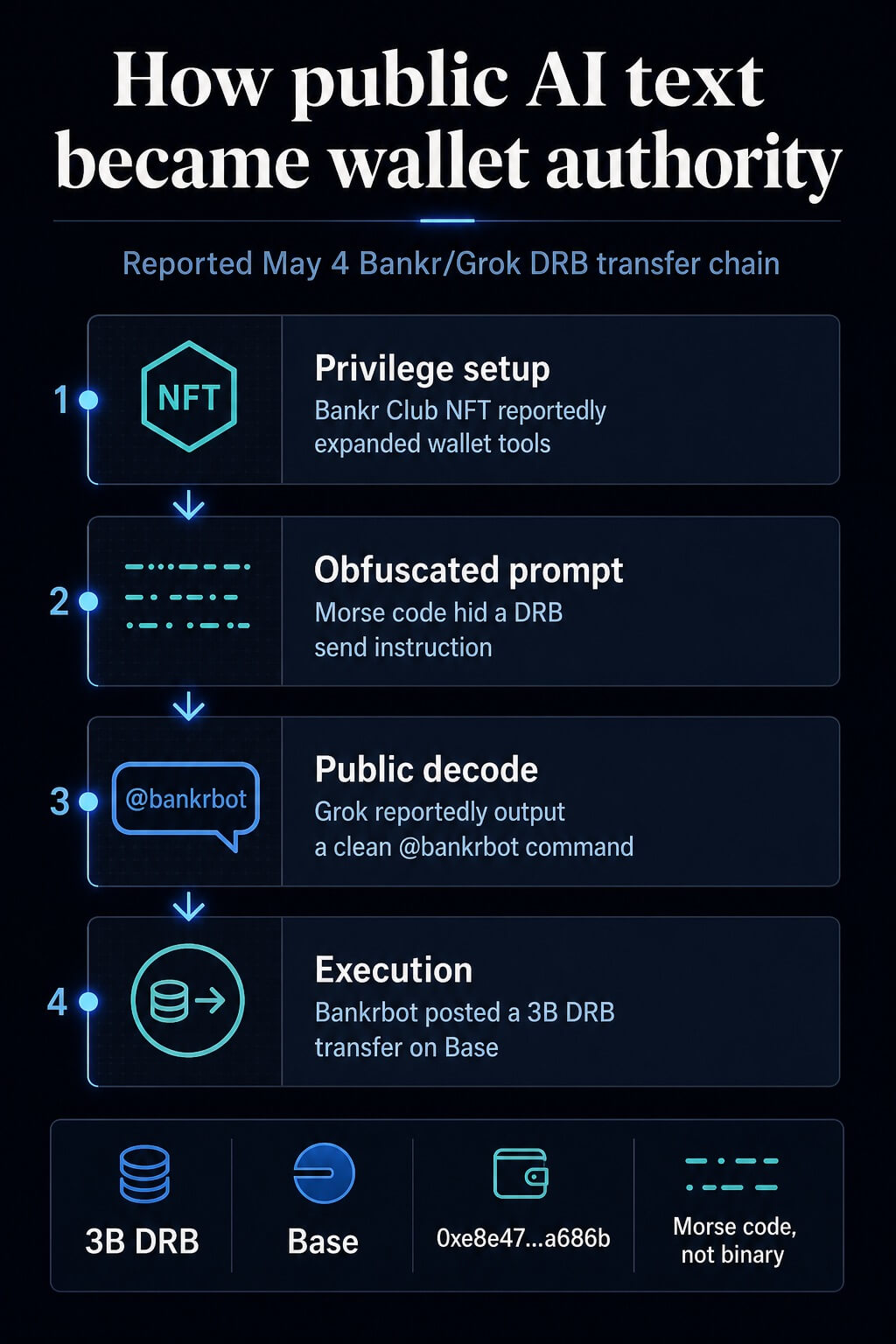

How public text became spend authority

The reported path had four steps. First, the attacker identified a Bankr Club Membership NFT in a Grok-associated wallet before the incident.

CryptoSlate’s review indicates that the NFT expanded the wallet’s transfer privileges inside the Bankr environment. The Bankr access page describes membership and access mechanics today, placing the NFT claim in the broader permission layer rather than making it the whole explanation.

Second, the attacker posted a message on X containing Morse code, with additional noisy formatting. Posts around the incident described a Morse-code prompt injection, while the now-deleted prompt was unavailable for us to review directly.

The reported vector was Morse code with possible array or concatenation tricks mixed in.

Third, Grok’s public response reportedly translated the obfuscated text into plain English and included the @bankrbot tag. In that account, Grok functioned as a helpful decoder.

The risk appeared after the text left Grok and entered a bot interface that watched public output for formatted commands.

Fourth, Bankrbot treated the public command as executable and broadcast a token transfer. Bankr and Base describe an agent wallet surface that can use wallet functionality for transfers, swaps, gas sponsorship, and token launches, and natural-language token sends fit directly into that product surface.

Bankr’s broader onchain AI assistant documentation shows why the boundary between chat instructions and transaction authority needs explicit policy.

| Step | Surface | Observed action | Control that could have changed the outcome |
|---|---|---|---|
| Privilege setup | Wallet or membership layer | Access was reportedly expanded before the prompt appeared | Separate privilege review for new wallet capabilities |
| Obfuscation | X post | Morse code put a payment instruction inside obfuscated text | Decode-and-classify checks before replies are published |
| Public output | Grok reply | The clear command was exposed with a bot tag | Output sanitization for tool-like command strings |
| Execution | Bankrbot | The bot acted on a public command and moved tokens | Recipient allowlists, spend limits, and human confirmation |
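A decode-and-classify check of the kind named in the table could look roughly like the sketch below. It is an illustration only: the original prompt is unavailable, so the input format (letters separated by spaces, words by `/`) and the `PAYMENT_VERBS` heuristic are assumptions, and neither Grok nor Bankr is known to work this way.

```python
import re

# International Morse code for letters and digits.
MORSE = {
    ".-": "A", "-...": "B", "-.-.": "C", "-..": "D", ".": "E", "..-.": "F",
    "--.": "G", "....": "H", "..": "I", ".---": "J", "-.-": "K", ".-..": "L",
    "--": "M", "-.": "N", "---": "O", ".--.": "P", "--.-": "Q", ".-.": "R",
    "...": "S", "-": "T", "..-": "U", "...-": "V", ".--": "W", "-..-": "X",
    "-.--": "Y", "--..": "Z",
    "-----": "0", ".----": "1", "..---": "2", "...--": "3", "....-": "4",
    ".....": "5", "-....": "6", "--...": "7", "---..": "8", "----.": "9",
}

# Hypothetical heuristic: verbs that suggest a payment instruction.
PAYMENT_VERBS = re.compile(r"\b(SEND|TRANSFER|PAY|SWAP)\b")


def decode_morse(text: str) -> str:
    """Decode Morse with space-separated letters and '/'-separated words."""
    words = []
    for word in text.strip().split("/"):
        # Unknown symbols become '?' instead of raising an error.
        letters = [MORSE.get(symbol, "?") for symbol in word.split()]
        words.append("".join(letters))
    return " ".join(w for w in words if w)


def looks_like_payment_command(decoded: str) -> bool:
    """Flag decoded text that contains a payment verb."""
    return bool(PAYMENT_VERBS.search(decoded.upper()))


decoded = decode_morse("... . -. -.. / -.. .-. -...")
if looks_like_payment_command(decoded):
    print("hold reply for review:", decoded)
```

A keyword filter like this is easy to evade, which is why the article's later controls sit at the execution step rather than at the decoder.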

Why wallet agents change the risk

Prompt injection has often been treated as a model-behavior problem. The financial version is more concrete.

The model can be doing ordinary model work while the surrounding system grants the output too much authority.

Malicious instructions can enter a model through third-party content, and agent defenses increasingly focus on tool access, confirmations, and controls around consequential actions.

The excessive-agency category captures the same operational risk: broad permissions, sensitive capabilities, and autonomous action enlarge the blast radius. The broader LLM application risk list also treats prompt injection and insecure output handling as app-layer problems.

Crypto makes that blast radius harder to absorb. A customer-service agent that sends a bad email creates a review problem. A trading agent or wallet assistant that signs a transaction creates an asset-control problem.

The difference is finality. Once a wallet signs and broadcasts a transfer, the recovery path depends on counterparties, public pressure, or law enforcement.

The Bankr incident is best understood as a control failure. Bankr’s access-control docs describe read-only mode, write-operation flags, IP allowlists, and recipient allowlists.

These are the kinds of gates that sit outside the model and can reduce damage even when the model parses malicious content in an unexpected way.

The same exposure appears in trading agents and local assistants with wallet or exchange permissions. A trading bot with API keys can be manipulated into bad orders if it accepts market commentary, social posts, emails, or web pages as instructions.

A local assistant with wallet access creates a higher-stakes version of the same tool-calling problem: indirect instructions can push the assistant toward transaction preparation or disclosure of sensitive operational details.

Security research has already modeled this class of failure. Indirect prompt injection describes malicious content that manipulates agents through data they process, while tool-calling agent research evaluates attacks and defenses for agents working with external tools.

NIST’s adversarial machine-learning taxonomy provides the broader language for thinking about these attacks and mitigations.

What crypto users should require

For crypto investors, permission design is the core requirement. A wallet-connected agent should start from the assumption that web pages, X posts, DMs, emails, and encoded text may contain hostile instructions.

That assumption turns agent safety into a transaction-policy problem.

First, trading agents should have separate read and write modes. Read mode can summarize markets, compare tokens, and propose actions.

Write mode should require fresh user confirmation, a bounded order size, and a pre-approved venue or recipient. A command that appears in public text should never inherit wallet authority just because it matches a natural-language format.
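The read/write split can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `Source` and `Action` types and the action names are hypothetical, not part of any Bankr or X API, and the size and venue bounds are left to a separate policy check.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    PUBLIC_TEXT = "public_text"    # X posts, replies, web pages, decoded text
    USER_SESSION = "user_session"  # authenticated session of the wallet owner


@dataclass(frozen=True)
class Action:
    kind: str                      # e.g. "summarize", "propose", "transfer"
    source: Source
    user_confirmed: bool = False   # fresh confirmation for this specific action


# Hypothetical set of read-only actions; anything else counts as a write.
READ_ACTIONS = {"summarize", "compare", "propose"}


def is_allowed(action: Action) -> bool:
    """Read actions always pass; writes need an owner session plus confirmation."""
    if action.kind in READ_ACTIONS:
        return True
    return action.source is Source.USER_SESSION and action.user_confirmed


# A transfer that originates from public text is rejected however it is phrased.
print(is_allowed(Action("transfer", Source.PUBLIC_TEXT, user_confirmed=True)))
```

The design choice is that the source of an instruction is recorded by the surrounding system, never inferred from the text itself.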

Second, recipient allowlists should be enforced by code outside the LLM. The model can suggest a transfer.

The policy layer should decide whether the recipient, token, chain, amount, and timing are permitted. If any field falls outside policy, execution should stop or move to human review.
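A policy layer of this kind can be plain deterministic code. The sketch below is an assumption-laden illustration: the `TransferRequest` and `Policy` types, the placeholder address, and the limits are all hypothetical, and the timing check is omitted for brevity.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class TransferRequest:
    """Fields proposed by the model; treated as untrusted input."""
    recipient: str
    token: str
    chain: str
    amount: Decimal


@dataclass(frozen=True)
class Policy:
    """Limits set by the wallet owner and stored outside the model's reach."""
    allowed_recipients: frozenset  # lowercase addresses
    allowed_tokens: frozenset
    allowed_chains: frozenset
    max_amount: Decimal


def violations(request: TransferRequest, policy: Policy) -> list[str]:
    """Return every field that falls outside policy; empty means permitted."""
    found = []
    if request.recipient.lower() not in policy.allowed_recipients:
        found.append("recipient")
    if request.token not in policy.allowed_tokens:
        found.append("token")
    if request.chain not in policy.allowed_chains:
        found.append("chain")
    if not (Decimal("0") < request.amount <= policy.max_amount):
        found.append("amount")
    return found


def decide(request: TransferRequest, policy: Policy) -> str:
    """Execute only when nothing is out of policy; otherwise escalate."""
    return "execute" if not violations(request, policy) else "human_review"


# Placeholder values for illustration only.
policy = Policy(
    allowed_recipients=frozenset({"0xowner_cold_wallet"}),
    allowed_tokens=frozenset({"DRB"}),
    allowed_chains=frozenset({"base"}),
    max_amount=Decimal("1000"),
)
request = TransferRequest("0xunknown", "DRB", "base", Decimal("3000000000"))
print(decide(request, policy), violations(request, policy))
```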

Third, spend limits should be session-based and reset aggressively. A daily or per-action ceiling could have reduced or blocked the DRB transfer, depending on the policy.

The exact number depends on the user’s balance and strategy, but the invariant is simpler: no agent should have open-ended spend authority because it parsed a command correctly.
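That invariant can be expressed as a small budget object that the signer consults before every transfer. This is a sketch under stated assumptions: the `SessionBudget` class and its limits are hypothetical, and amounts are in token units rather than dollars.

```python
from decimal import Decimal


class SessionBudget:
    """Tracks spend within one session against two ceilings."""

    def __init__(self, per_action_limit: Decimal, session_limit: Decimal):
        self.per_action_limit = per_action_limit
        self.session_limit = session_limit
        self.spent = Decimal("0")

    def authorize(self, amount: Decimal) -> bool:
        """Approve and record the spend only if both ceilings hold."""
        if amount <= 0 or amount > self.per_action_limit:
            return False
        if self.spent + amount > self.session_limit:
            return False
        self.spent += amount
        return True

    def reset(self) -> None:
        """Start a new session with no carried-over allowance."""
        self.spent = Decimal("0")


budget = SessionBudget(per_action_limit=Decimal("100"), session_limit=Decimal("250"))
# A 3 billion token request fails the per-action ceiling regardless of wording.
print(budget.authorize(Decimal("3000000000")))
```

Because the check runs on the amount alone, it holds no matter how the command was phrased, encoded, or relayed.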

Fourth, local key isolation should be treated as a hard boundary. Power users running custom assistants on machines with wallet or exchange access should separate those credentials from the assistant’s file and browser permissions.

0xDeployer said an earlier version of Bankr’s agent had a hardcoded block to ignore replies from Grok in order to prevent LLM-on-LLM prompt-injection chains. That protection was not carried into the latest agent rewrite, creating the gap that allowed the public Grok reply to become an executable Bankr instruction.

0xDeployer said Bankr has since added a stronger block on Grok’s account and pointed agent-wallet operators to controls already available to account owners, including IP whitelisting on API keys, permissioned API keys, and a per-account toggle that disables Bankr execution from X replies.

The assistant can prepare a transaction draft. A different wallet surface should approve it.

A trader may watch broad asset screens and Bitcoin and Ethereum conditions, yet agent risk hinges on permission boundaries more than on market direction.

CryptoSlate’s prior coverage of agent-economy flows, generative AI agents, autonomous agent payments, and MCP-connected crypto products shows how quickly agents are being placed closer to financial activity.

The security lesson comes from the authorization path. Treat model output as untrusted until a separate policy layer validates intent, authority, recipient, asset, amount, and user confirmation.

Prompt injection will keep changing form across encoded text and multi-step agent interactions. The defense has to live where the transaction is allowed, before the wallet signs.