The IBD Process

Synchronizing a new node to the network tip involves several distinct stages:

- Peer discovery and chain selection, where the node connects to random peers and determines the most-work chain.

- Header download, when block headers are fetched and connected to form the full header chain.

- Block download, when the node requests blocks belonging to that chain from multiple peers simultaneously.

- Block and transaction validation, where each block’s transactions are verified before the next one is processed.
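The chain selection in the first stage can be illustrated with a minimal sketch. It assumes the usual definition of per-block work as the expected number of hash attempts for a given target; the `Header` type and function names are hypothetical and do not mirror Bitcoin Core's actual code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Header:
    """Toy header holding only the field needed to estimate work (hypothetical type)."""
    target: int  # proof-of-work target; a lower target means more work


def block_work(target: int) -> int:
    """Expected number of hash attempts needed to find a hash at or below `target`."""
    return (1 << 256) // (target + 1)


def chain_work(headers: list[Header]) -> int:
    """Accumulated work of a header chain."""
    return sum(block_work(h.target) for h in headers)


def most_work_chain(candidates: list[list[Header]]) -> list[Header]:
    """Pick the candidate chain with the most accumulated work, not the most blocks."""
    return max(candidates, key=chain_work)
```

Note that a short chain of hard blocks can outweigh a longer chain of easy ones, which is why the comparison is by work rather than by length.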

While block validation itself is inherently sequential (each block depends on the state produced by the previous one), much of the surrounding work runs in parallel. Header synchronization, block downloads, and script verification can all occur concurrently on different threads. An ideal IBD saturates all subsystems: network threads fetching data, validation threads verifying signatures, and database threads writing the resulting state.

Without continuous performance improvement, low-cost nodes might not be able to join the network in the future.

Intro

Bitcoin’s “don’t trust, verify” culture requires that the ledger can be rebuilt by anyone from scratch. After processing all historical transactions, every user should arrive at exactly the same local state of everyone’s funds as the rest of the network.

This reproducibility is at the heart of Bitcoin’s trust-minimized design, but it comes at a significant cost: after almost 17 years, this ever-growing database forces newcomers to do more work than ever before they can join the Bitcoin network.

When a new node is bootstrapped, it has to download, verify, and persist every block from genesis to the current chain tip, a resource-intensive synchronization process called Initial Block Download (IBD).

While consumer hardware continues to improve, keeping IBD requirements low remains critical for maintaining decentralization by keeping validation accessible to everyone, from lower-powered devices like Raspberry Pis to high-powered servers.

Benchmarking process

Performance optimization begins with understanding how software components, data patterns, hardware, and network conditions interact to create bottlenecks. This requires extensive experimentation, most of which gets discarded. Beyond the usual balancing act between speed, memory usage, and maintainability, Bitcoin Core developers must choose the lowest-risk/highest-return changes. Valid-but-minor optimizations are often rejected as too risky relative to their benefit.

We have a large suite of micro-benchmarks to ensure existing functionality doesn’t degrade in performance. These are useful for catching regressions, i.e. performance backslides in individual pieces of code, but aren’t necessarily representative of overall IBD performance.

Contributors proposing optimizations provide reproducers and measurements across different environments: operating systems, compilers, storage types (SSD vs HDD), network speeds, dbcache sizes, node configurations (pruned vs archival), and index combinations. We write single-use benchmarks and use compiler explorers to validate which setup would perform better in a specific scenario (e.g. intra-block duplicate transaction checking with a hash set vs a sorted set vs a sorted vector).
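A single-use benchmark of this kind might look like the following sketch. It is written in Python for brevity rather than in Bitcoin Core's C++, the function names are hypothetical, and outpoints are modeled as `(txid, index)` tuples. The relative timings it prints say nothing about the C++ data structures; the point is only the shape of the experiment: two interchangeable implementations and one timing harness.

```python
import timeit


def has_duplicates_hash_set(outpoints):
    """Detect a repeated outpoint using a hash set (early exit on first repeat)."""
    seen = set()
    for op in outpoints:
        if op in seen:
            return True
        seen.add(op)
    return False


def has_duplicates_sorted(outpoints):
    """Detect a repeated outpoint by sorting and comparing neighbours."""
    ordered = sorted(outpoints)
    return any(a == b for a, b in zip(ordered, ordered[1:]))


def time_both(n=2000, repeats=20):
    """Time both strategies on n unique outpoints (the worst case: no early exit)."""
    outpoints = [(i.to_bytes(32, "big"), 0) for i in range(n)]
    return {
        "hash_set": timeit.timeit(lambda: has_duplicates_hash_set(outpoints), number=repeats),
        "sorted": timeit.timeit(lambda: has_duplicates_sorted(outpoints), number=repeats),
    }
```

Calling `time_both()` returns the total seconds spent in each variant, which can then be compared across input sizes and machines.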

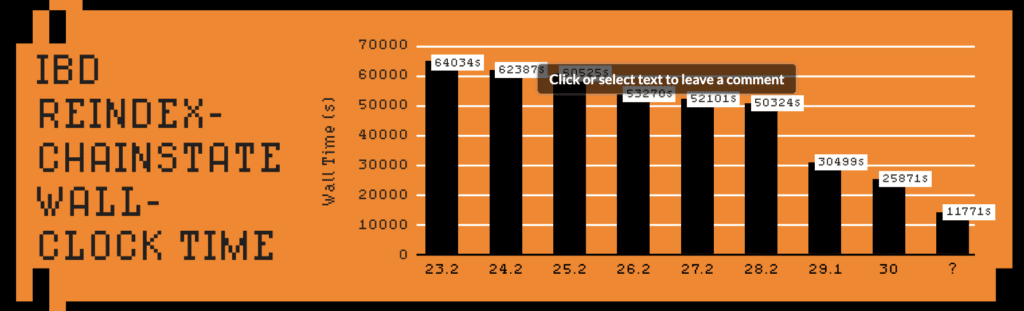

We’re also regularly benchmarking the IBD process. This can be done by reindexing the chainstate and optionally the block index from local block files, or doing a full IBD either from local peers (to avoid slow peers affecting timings) or from the wider p2p network itself.

IBD benchmarks generally show smaller improvements than micro-benchmarks since network bandwidth or other I/O is often the bottleneck; downloading the blockchain alone takes ~16 hours with average global internet speeds.

For maximum reproducibility, -reindex-chainstate is often favored, creating memory and CPU profiles before and after the optimization and validating how the change affects other functionality.
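The download estimate is simple arithmetic, sketched below. The inputs in the usage note are illustrative assumptions, not figures taken from this article: a chain size of roughly 700 GB and a sustained 100 Mbit/s connection.

```python
def download_hours(chain_size_gb: float, bandwidth_mbit_s: float) -> float:
    """Hours needed to transfer `chain_size_gb` (decimal GB) at `bandwidth_mbit_s`."""
    megabits = chain_size_gb * 8_000  # 1 GB = 8,000 megabits
    seconds = megabits / bandwidth_mbit_s
    return seconds / 3600
```

Under those assumed inputs, `download_hours(700, 100)` comes out at about 15.6 hours, in the same range as the ~16 hours quoted above.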

Historical and ongoing improvements

Early Bitcoin Core versions were designed for a much smaller blockchain. The original Satoshi prototype laid the foundations, but without constant innovation from Bitcoin Core developers it would not have been able to handle the network’s unprecedented growth.

Originally the block index stored every historical transaction and whether it was spent, but in 2012 “Ultraprune” (PR #1677) created a dedicated database for tracking unspent transaction outputs, forming the UTXO set, which pre-caches the latest state of all spendable coins and provides a unified view for validation. Combined with a database migration from Berkeley DB to LevelDB, validation speeds were significantly improved.

However, this database migration caused the BIP50[1] chain fork when a block with many transaction inputs was accepted by upgraded nodes but rejected by older versions as being too complicated. This highlights how Bitcoin Core development differs from typical software engineering: even pure performance optimizations have the potential to result in accidental chain splits.

The following year, PR #2060 enabled multithreaded signature validation. Around the same time, the specialized cryptographic library libsecp256k1 was created, and it was integrated into Bitcoin Core in 2014. Over the following decade, through continuous optimizations, it became more than 8x faster than the same functionality in the general-purpose OpenSSL library.

Headers-first sync (PR #4468, 2014) restructured the IBD process to first download the block header chain with the most accumulated work, then fetch blocks from multiple peers simultaneously. Besides accelerating IBD, it also eliminated wasted bandwidth on blocks that would be orphaned because they weren’t in the main chain.

In 2016 PR #9049 removed what appeared to be a redundant duplicate-input check, introducing a consensus bug that could have allowed supply inflation. Fortunately, it was discovered and patched before exploitation. This incident drove major investments in testing resources. Today, with differential fuzzing, broad coverage, and stricter review discipline, Bitcoin Core surfaces and resolves issues far more quickly, with no comparable consensus hazards reported since.[2]

In 2017 -assumevalid (PR #9484) separated general block validity checks from the expensive signature verification, making the latter optional for most of IBD and cutting its time roughly in half. Block structure, proof-of-work, and spending rules remain fully verified: -assumevalid skips signature checks entirely for all blocks up to a certain block height.

In 2022 PR #25325 replaced Bitcoin Core’s ordinary memory allocator with a custom pool-based allocator optimized for the coins cache. By designing specifically for Bitcoin’s allocation patterns, it reduced memory waste and improved cache efficiency, delivering ~21% faster IBD while fitting more coins in the same memory footprint.
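The split that -assumevalid introduces can be sketched as follows. This is a toy model, not Bitcoin Core's implementation: `connect_block`, `verify_script`, and the dictionary-based UTXO set are hypothetical, and the cutoff is modeled as a plain height to follow the description above.

```python
def connect_block(height, inputs, utxos, assumed_valid_height, verify_script):
    """Apply one block's spends to `utxos` and return how many script checks ran.

    inputs: list of (outpoint, witness) pairs spent by the block
    utxos:  dict mapping outpoint -> locking script (toy UTXO set)
    """
    script_checks = 0
    for outpoint, witness in inputs:
        # Spending rules are always enforced: the coin must exist and be unspent.
        if outpoint not in utxos:
            raise ValueError("missing or already spent input")
        # Only the expensive script/signature check is skipped below the cutoff.
        if height > assumed_valid_height:
            if not verify_script(utxos[outpoint], witness):
                raise ValueError("script verification failed")
            script_checks += 1
        del utxos[outpoint]
    return script_checks
```

In this sketch a double spend is rejected at any height, while a bad signature is only detected above the assumed-valid cutoff, which is the trade-off the option makes.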

While code itself doesn’t rot, the system it operates within constantly evolves. Every 10 minutes Bitcoin’s state changes: usage patterns shift, bottlenecks migrate. Maintenance and optimization aren’t optional; without constant adaptation, Bitcoin would accumulate vulnerabilities faster than a static codebase could defend against, and IBD performance would steadily regress despite advances in hardware.

The growing size of the UTXO set and growth in average block weight exemplify this evolution. Tasks that were once CPU-bound (like signature verification) are now often Input/Output (IO)-bound due to heavier chainstate access (having to check the UTXO set on disk). This shift has driven new priorities: improving memory caching, reducing LevelDB flush frequency, and parallelizing disk reads to keep modern multi-core CPUs busy.

Recent optimizations

Software designs are based on predicted usage patterns, which inevitably diverge from reality as the network evolves. Bitcoin’s deterministic workload allows us to measure actual behavior and course correct later, ensuring performance keeps pace with the network’s growth.

We’re constantly adjusting defaults to better match real-world usage patterns. A few examples:

- PR #30039 increased LevelDB’s max file size, a single parameter change that delivered ~30% IBD speedup by better matching how the chainstate database (UTXO set) is actually accessed.

- PR #31645 doubled the flush batch size, reducing fragmented disk writes during IBD’s most write-intensive phase and speeding up progress saves when IBD is interrupted.

- PR #32279 adjusted the internal prevector storage size (used mainly for in-memory script storage). The old pre-segwit threshold prioritized older script templates at the expense of newer ones. By adjusting the capacity to cover modern script sizes, heap allocations are avoided, memory fragmentation is reduced, and script execution benefits from better cache locality.

These are all small, surgical changes with measurable validation impacts.

Beyond parameter tuning, some changes required rethinking existing designs:

- PR #28280 improved how pruned nodes (which discard old blocks to save disk space) handle frequent memory cache flushes. The original design either dumped the entire cache or scanned it to find modified entries. Selectively tracking modified entries enabled over 30% speedup for pruned nodes with maximum dbcache and ~9% improvement with default settings.

- PR #31551 introduced read/write batching for block files, reducing the overhead of many small filesystem operations. The 4x-8x speedup in block file access improved not just IBD but other RPCs as well.

- PR #31144 optimized the existing optional block file obfuscation (used to make sure data isn’t stored in cleartext on disk) by processing 64-bit chunks instead of byte-by-byte operations, delivering another IBD speedup. With obfuscation being essentially free, users no longer need to choose between safe storage and performance.
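The idea behind the last item, XOR-ing data against a repeating key one 64-bit word at a time instead of one byte at a time, can be sketched as below. This is an illustration only, assuming an 8-byte key; the function names are hypothetical, and in Python the chunked variant demonstrates equivalence of the two approaches rather than the speedup measured in C++.

```python
def xor_bytewise(data: bytes, key: bytes) -> bytes:
    """Reference version: XOR each byte with the repeating key."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(data))


def xor_chunked(data: bytes, key: bytes) -> bytes:
    """Same result, using one 64-bit XOR per 8 bytes plus a byte-wise tail."""
    if len(key) != 8:
        raise ValueError("this sketch assumes an 8-byte key")
    key_word = int.from_bytes(key, "little")
    out = bytearray(len(data))
    full = len(data) - len(data) % 8  # length covered by whole 8-byte words
    for off in range(0, full, 8):
        word = int.from_bytes(data[off:off + 8], "little") ^ key_word
        out[off:off + 8] = word.to_bytes(8, "little")
    for i in range(full, len(data)):  # remaining 0..7 bytes
        out[i] = data[i] ^ key[i % 8]
    return bytes(out)
```

Because XOR is its own inverse, applying either function twice with the same key returns the original data, so the same routine serves for both writing and reading.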

Other minor caching optimizations (such as PR #32487) enabled adding more safety checks that were deemed too expensive before (PR #32638).

Similarly, we can now flush the cache more frequently to disk (PR #30611), ensuring nodes never lose more than one hour of validation work in case of crashes. The modest overhead was acceptable because earlier optimizations had already made IBD significantly faster.

PR #32043 currently serves as a tracker for IBD-related performance improvements. It groups a dozen ongoing efforts, from disk and cache tuning to concurrency improvements, and provides a framework for measuring how each change affects real-world performance. This approach encourages contributors to present not only code but also reproducible benchmarks, profiling data, and cross-hardware comparisons.

Future optimization proposals

PR #31132 parallelizes transaction input fetching during block validation. Currently, each input is fetched from the UTXO set sequentially; cache misses require disk round trips, creating an IO bottleneck. The PR introduces parallel fetching across multiple worker threads, achieving up to ~30% faster -reindex-chainstate (~10 hours on a Raspberry Pi 5 with 450MB dbcache). As a side effect, this narrows the performance gap between small and large -dbcache values, potentially allowing nodes with modest memory to sync nearly as fast as high-memory configurations.

Besides IBD, PR #26966 parallelizes block filter and transaction index construction using configurable worker threads.

Keeping the persisted UTXO set compact is critical for node accessibility. PR #33817 experiments with reducing it slightly by removing an optional LevelDB feature that might not be needed for Bitcoin’s specific use case.

SwiftSync[3] is an experimental approach leveraging our hindsight about historical blocks. Knowing the actual outcome, we can categorize every encountered coin by its final state at the target height: those still unspent (which we store) and those spent by that height (which we can ignore, simply verifying they appear in matching create/spend pairs anywhere). Pre-generated hints encode this classification, allowing nodes to skip UTXO operations for short-lived coins entirely.
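The general technique of prefetching inputs in parallel can be sketched like this. It is a toy model and not the PR's code: `fetch_inputs_parallel`, `disk_lookup`, and the dictionary cache are hypothetical stand-ins for the coins cache and the on-disk UTXO database.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_inputs_parallel(outpoints, cache, disk_lookup, workers=4):
    """Return the coins for `outpoints`, loading cache misses concurrently.

    cache:       dict mapping outpoint -> coin (in-memory cache)
    disk_lookup: callable(outpoint) -> coin or None (slow, IO-bound lookup)
    """
    missing = [op for op in outpoints if op not in cache]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so results line up with `missing`.
        for op, coin in zip(missing, pool.map(disk_lookup, missing)):
            if coin is None:
                raise KeyError(f"input {op!r} not found in the UTXO set")
            cache[op] = coin
    return [cache[op] for op in outpoints]
```

Cache hits never touch the disk, and the misses overlap their waiting time instead of queuing behind each other, which is where the gain on IO-bound workloads comes from.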
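A heavily simplified sketch of this classification follows. It is one reading of the idea rather than the prototype's actual design: the salted SHA-256 aggregate, the `swiftsync_replay` name, and the string outpoints are all assumptions made for illustration.

```python
import hashlib

MOD = 1 << 256


def _digest(salt: bytes, outpoint: str) -> int:
    """Salted hash of an outpoint, as an integer."""
    return int.from_bytes(hashlib.sha256(salt + outpoint.encode()).digest(), "big")


def swiftsync_replay(blocks, hints, salt):
    """Replay blocks using hints; return the UTXO set at the target height.

    blocks: iterable of (created_outpoints, spent_outpoints) per block
    hints:  dict mapping outpoint -> True if still unspent at the target height
    """
    utxos = set()
    aggregate = 0
    for created, spent in blocks:
        for op in created:
            if hints[op]:
                utxos.add(op)  # long-lived coin: store it
            else:
                aggregate = (aggregate + _digest(salt, op)) % MOD  # short-lived: only count it
        for op in spent:
            aggregate = (aggregate - _digest(salt, op)) % MOD
    # Every coin hinted as spent must be matched by exactly one spend.
    if aggregate != 0:
        raise ValueError("create/spend pairs do not match; hints or blocks are invalid")
    return utxos
```

Since additions and subtractions commute, the order in which creations and spends are encountered does not matter, and no per-coin lookup is needed for short-lived coins.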

Bitcoin Is Open To Anyone

Beyond synthetic benchmarks, a recent experiment[4] ran the SwiftSync prototype on an underclocked Raspberry Pi 5 powered by a battery pack over WiFi, completing -reindex-chainstate of 888,888 blocks in 3h 14m. Measurements with equivalent configurations show a 250% full validation speedup[5] across recent Bitcoin Core versions.

Years of accumulated work translate to real impact: fully validating nearly a million blocks can now be done in less than a day on low-cost hardware, maintaining accessibility despite continuous blockchain growth.

Self-sovereignty is extra accessible than ever.

This piece is the Letter from the Editor featured in the latest Print edition of Bitcoin Magazine, The Core Issue. We’re sharing it here as an early look at the ideas explored throughout the full issue.

[1] https://github.com/bitcoin/bips/blob/master/bip-0050.mediawiki

[2] https://en.bitcoin.it/wiki/Common_Vulnerabilities_and_Exposures

[3] https://delvingbitcoin.org/t/swiftsync-speeding-up-ibd-with-pre-generated-hints-poc/1562

[4] https://x.com/L0RINC/status/1972062557835088347

[5] https://x.com/L0RINC/status/1970918510248575358

All Pull Requests (PR) listed in this article can be looked up by number here: https://github.com/bitcoin/bitcoin/pulls